Nvidia has announced a $2 billion investment in Marvell Technology, marking a significant step in its strategy to expand beyond GPUs and solidify its position at the center of the rapidly evolving artificial intelligence (AI) ecosystem.

This move highlights a broader industry shift: competition is no longer limited to chip performance but is increasingly focused on end-to-end AI infrastructure.

The Growing Importance of AI Infrastructure

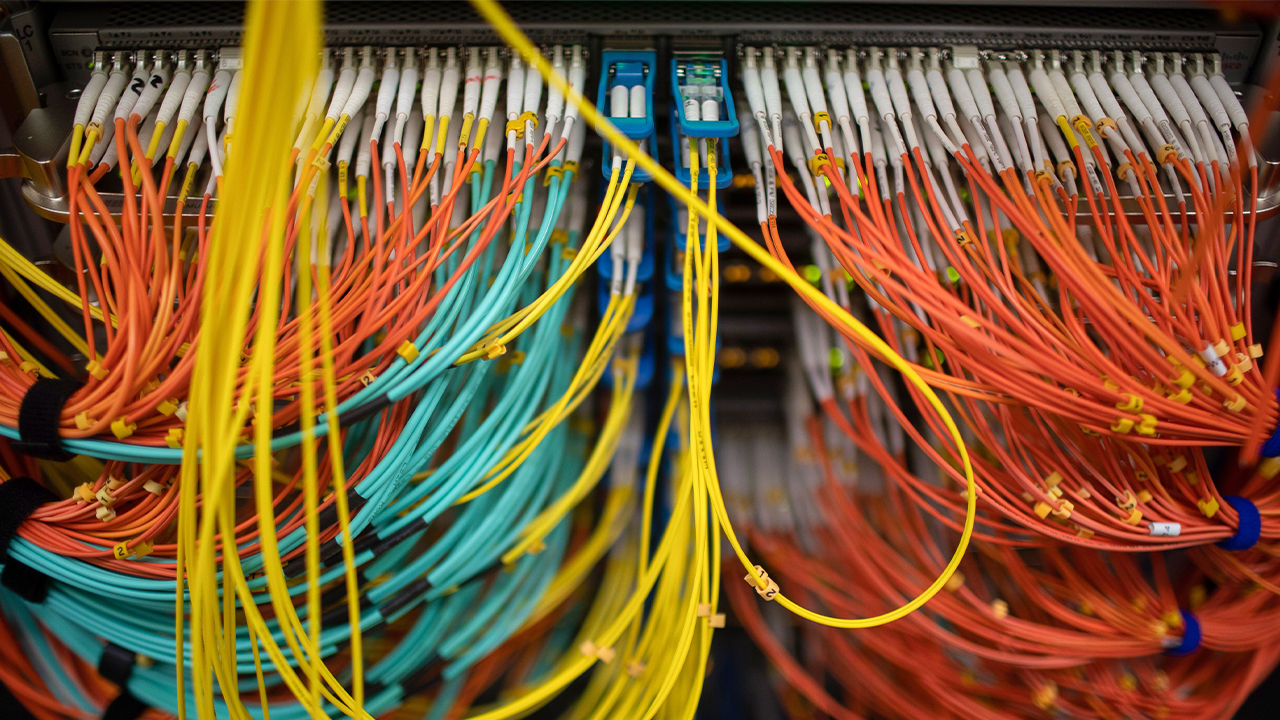

As AI adoption accelerates, the demands placed on data centers are becoming more complex. While compute power remains critical, data movement and energy efficiency have emerged as key bottlenecks.

Modern AI workloads require:

- High-bandwidth communication between thousands of processors

- Low-latency networking across distributed systems

- Energy-efficient architectures to manage operational costs

This shift is driving increased demand for advanced networking technologies and custom silicon solutions.

Why Marvell Matters

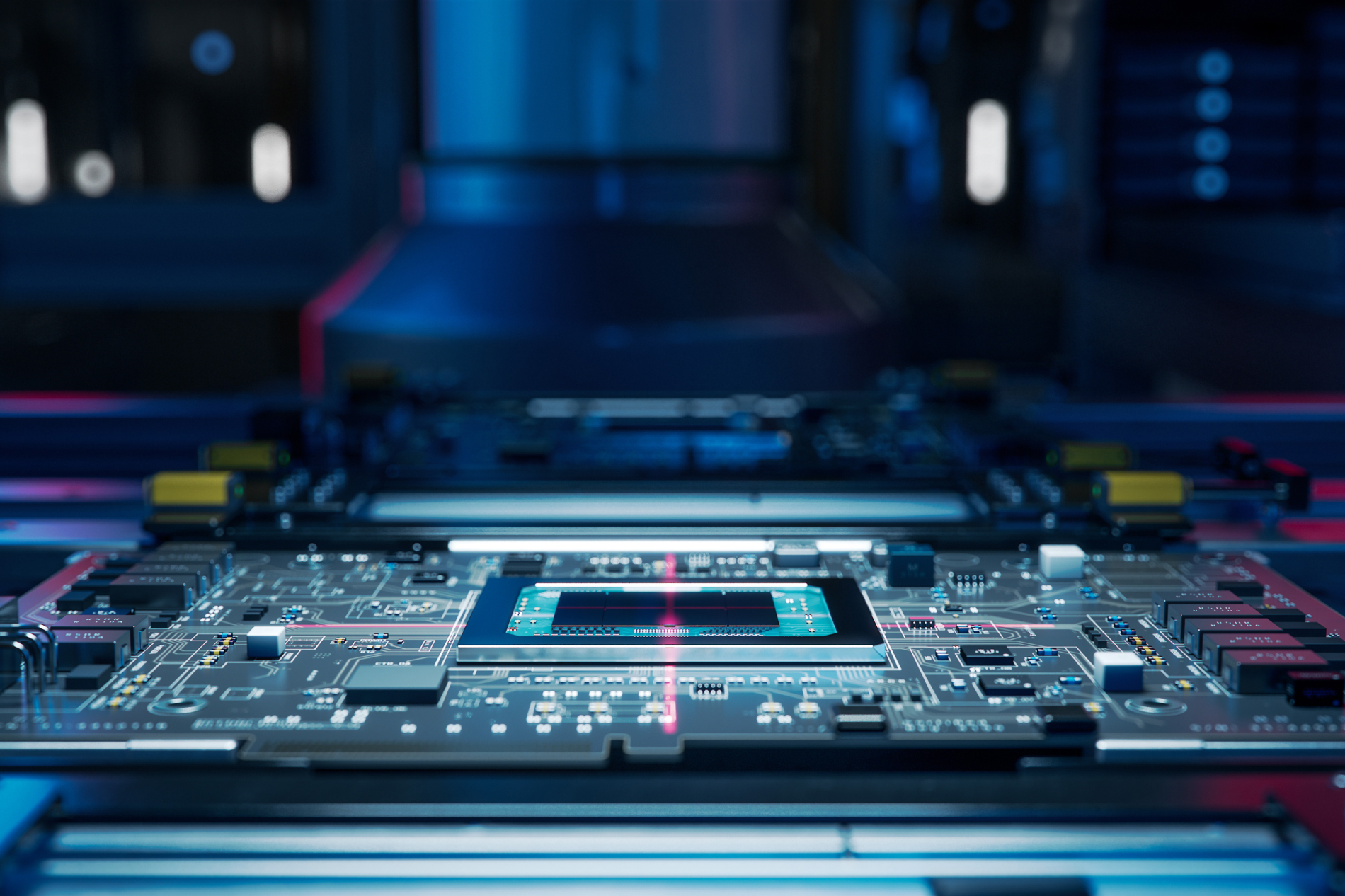

Marvell brings critical capabilities that complement Nvidia’s strengths in GPU computing.

Key areas of expertise include:

- Semi-custom silicon tailored to hyperscale customers

- Optical interconnect technologies for high-speed data transfer

- Silicon photonics, enabling faster communication with lower power consumption

By integrating these technologies, Nvidia can address one of the most pressing challenges in AI infrastructure: scaling performance without a matching rise in energy usage.

Strategic Positioning Amid Rising Competition

Major technology companies such as Alphabet and Meta are increasingly investing in custom AI chips to reduce reliance on third-party suppliers.

This trend presents a structural challenge for Nvidia, because in-house accelerators can displace demand for its GPUs.

However, rather than competing directly with custom silicon initiatives, Nvidia is adapting its strategy:

- Expanding compatibility with third-party chips

- Strengthening its role in system-level architecture

- Positioning itself as the core infrastructure layer

This approach allows Nvidia to remain central to AI deployments, even as hardware diversification increases.

Technology Integration: NVLink and Optical Networking

The collaboration will focus on integrating Marvell’s networking solutions with Nvidia’s platform technologies, including NVLink Fusion.

Key components include:

- NVLink Fusion: Extends Nvidia's NVLink interconnect to third-party CPUs and custom accelerators, allowing them to operate alongside Nvidia GPUs as a unified system

- Optical interconnects: Improve speed and reduce power consumption in data transfer

- Networking ASICs: Support large-scale AI workloads across distributed systems

Together, these technologies enable more scalable and efficient AI infrastructure at the data center level.

Market Impact and Industry Outlook

The market reacted positively to the announcement, with Marvell shares rising approximately 7% and Nvidia gaining nearly 3%.

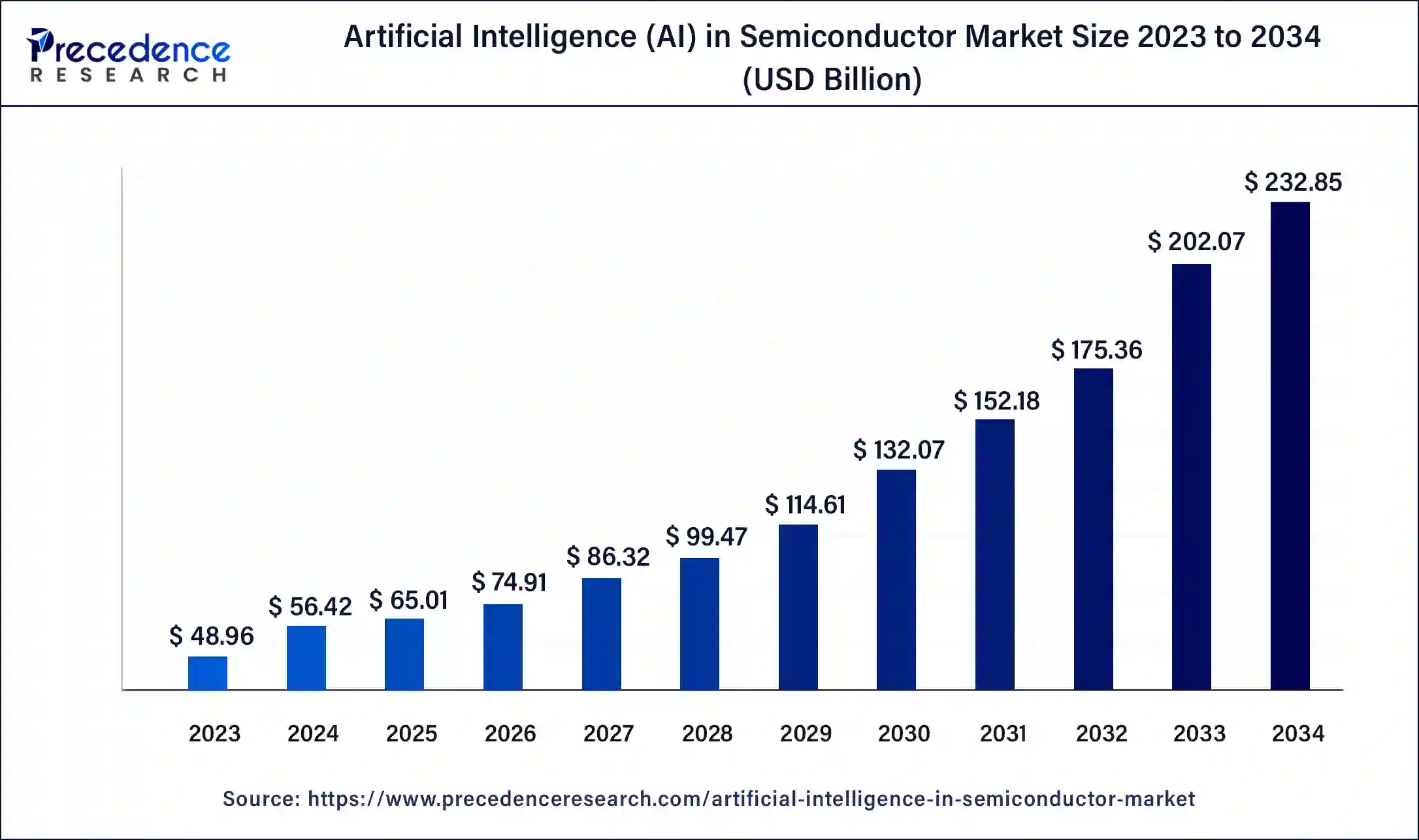

The broader context reinforces the significance of this investment. Technology companies are expected to spend over $630 billion on AI infrastructure this year, driving demand across:

- Semiconductors

- Networking equipment

- Data center expansion

Marvell has projected strong growth, with revenue expected to approach $15 billion.

Conclusion

Nvidia’s investment in Marvell reflects a critical evolution in the AI industry.

The competitive landscape is shifting from isolated hardware performance to integrated ecosystem control. Success will increasingly depend on the ability to deliver complete, scalable, and energy-efficient AI platforms.

By combining its computing leadership with Marvell's networking and custom silicon expertise, Nvidia is positioning itself not just as a chip supplier but as a foundational provider of AI infrastructure.