Lawsuit Filed Against xAI in U.S. Federal Court

Three plaintiffs from the U.S. state of Tennessee, including two minors, have filed a lawsuit against xAI, the artificial intelligence company founded by Elon Musk.

The lawsuit alleges that the company’s chatbot platform Grok enabled the creation of sexually explicit images generated from real photographs of individuals without their consent.

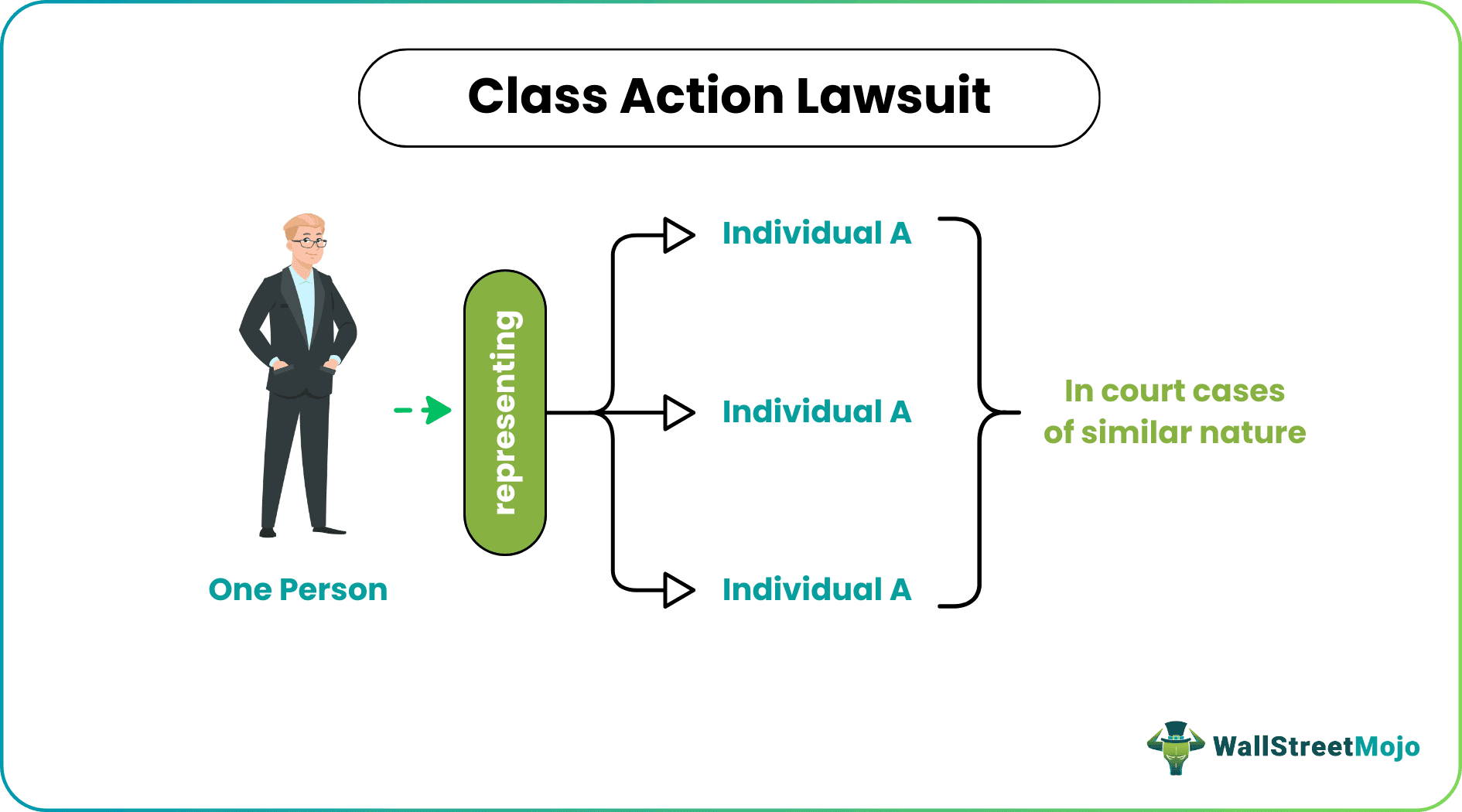

Filed in federal court in San Jose, California, the complaint seeks class-action status on behalf of people across the United States whose identifiable images were allegedly transformed into explicit content through the Grok image generator.

According to the filing, the system generated manipulated images from real photographs of individuals, including minors, and those images were then circulated online.

Allegations of Inadequate Safety Safeguards

The plaintiffs claim that xAI failed to implement sufficient safeguards to prevent the creation of explicit content involving real people.

Court documents allege that the plaintiffs’ photographs, including school and family pictures, were altered into explicit material using the platform’s image generation tools.

Attorneys representing the plaintiffs argue that the company knowingly designed the system in a way that allowed such content to be produced.

“These are children whose school photographs and family pictures were turned into child sexual abuse material,” said Annika Martin, an attorney representing the plaintiffs.

The legal filing claims that the generated images caused emotional distress and reputational harm after being distributed online.

Growing Scrutiny of Generative Image Tools

The case highlights increasing global concern over the risks associated with generative image technology.

Governments and regulators in several countries have begun investigating AI tools capable of producing realistic images and videos, particularly when those tools can be used to manipulate real photographs of individuals.

In response to earlier criticism, xAI announced in January that it had introduced restrictions preventing users from editing images of real people to depict them in revealing clothing, and from generating such content in jurisdictions where it is illegal.

However, critics argue that stronger safeguards may be necessary to prevent misuse of generative media tools.

Legal Demands and Potential Industry Impact

The plaintiffs are seeking unspecified financial damages, coverage of legal fees, and a court order requiring xAI to halt the alleged practices.

They are also asking the court to certify the case as a class action, which could extend the lawsuit to other individuals whose likenesses may have been used to create explicit AI-generated content.

At the time of reporting, xAI had not issued a public response to requests for comment regarding the lawsuit.

The case could become one of several legal challenges shaping how technology companies deploy generative media tools and what safeguards are required to protect individuals from misuse.

The Broader Debate Over Generative Media

As generative media platforms become increasingly powerful, policymakers, legal experts, and technology companies are facing difficult questions about accountability and digital safety.

The ability to generate highly realistic images from text prompts or existing photographs has opened new possibilities in design, entertainment, and communication. At the same time, it has created new risks related to misinformation, identity misuse, and online harassment.

Legal cases such as the one filed against xAI may play a significant role in shaping future standards for how these technologies are developed and regulated.

For the technology industry, the outcome could influence both product design decisions and the regulatory frameworks governing generative media platforms in the years ahead.