Nvidia Expands Its Vision for the AI Chip Market

Nvidia has outlined an ambitious outlook for the artificial intelligence chip market, projecting that the total revenue opportunity for AI computing infrastructure could reach $1 trillion by 2027. That is roughly double the company’s previous estimate of about $500 billion through 2026, signaling accelerating demand for the high-performance computing systems that support large-scale AI applications.

The announcement was made by Nvidia CEO Jensen Huang during the company’s annual GTC developer conference in San Jose, California—an event that has evolved into one of the most influential gatherings in the global technology industry.

The updated forecast reflects Nvidia’s belief that deploying AI systems across industries will require computing infrastructure on a far larger scale, particularly as companies move beyond building AI models and toward delivering real-time services to millions of users.

A Strategic Shift Toward Inference Computing

For the past several years, most AI-related investment has focused on training large models, a process that requires enormous amounts of computing power. Nvidia’s graphics processors have dominated this segment of the market.

However, the next stage of the AI industry is expected to revolve around inference computing—the process of running trained models to answer user queries or perform tasks in real time.

This shift dramatically expands the scale of computing infrastructure required. Training is largely a one-time cost per model, but inference runs every time a user interacts with that model, so companies must now serve millions or even billions of interactions a day.

According to Huang, the rapid growth in AI-driven services is creating a new wave of demand for data center hardware capable of handling real-time workloads efficiently.

“The inference inflection has arrived,” Huang said during his keynote presentation. “Demand continues to grow as more organizations deploy AI systems in production environments.”

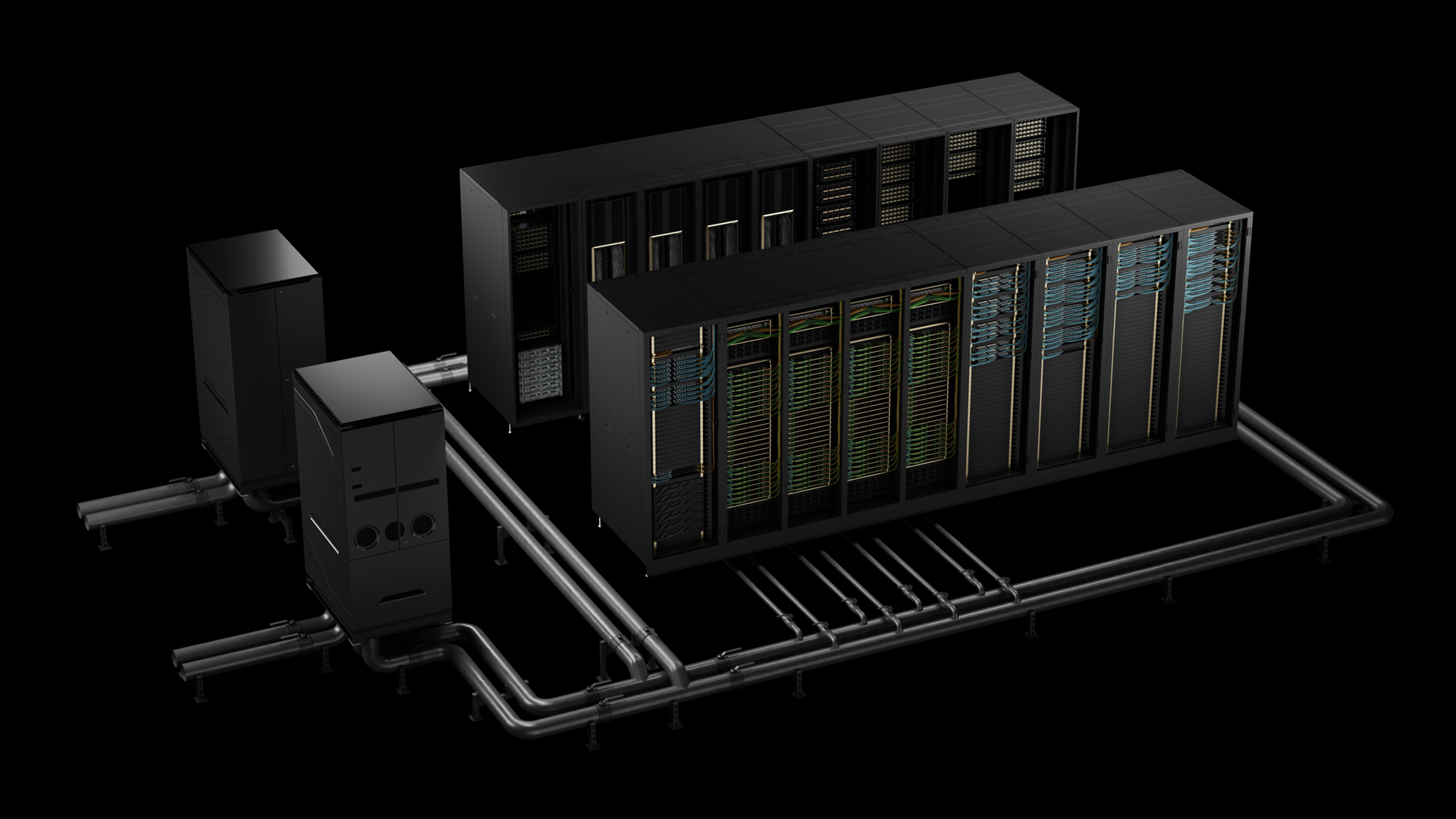

New Hardware Platforms for Data Center Infrastructure

To address this growing market, Nvidia introduced several new technologies designed specifically for large-scale inference computing.

Among the announcements was a new central processing unit (CPU) platform as well as a broader computing system built on technology from Groq, a chip startup whose architecture Nvidia licensed in a deal valued at approximately $17 billion.

The system is designed to divide inference workloads into two distinct stages:

• Prefill stage – where the user’s input is converted into tokens and the model processes the full prompt

• Decode stage – where the system generates the response, one token at a time

In Nvidia’s architecture, the company’s Vera Rubin chips handle the initial prefill stage, while Groq-based processors accelerate the decoding process that produces final responses.

This hybrid approach aims to improve efficiency in large-scale AI deployments where response speed and power consumption are critical factors.
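The two-stage split described above can be illustrated with a small Python sketch. It is a toy model under stated assumptions, not Nvidia's or Groq's implementation: the names `prefill`, `decode`, `serve`, and `PrefillResult` are hypothetical, the integer list stands in for the cached state a real model would keep, and the echo rule replaces actual token generation. It shows only the handoff between a stage that processes the prompt and a stage that produces output token by token.

```python
from dataclasses import dataclass, field


@dataclass
class PrefillResult:
    """State handed from the prefill stage to the decode stage (hypothetical)."""
    prompt_tokens: list[str]
    cache: list[int] = field(default_factory=list)  # stand-in for cached model state


def prefill(prompt: str) -> PrefillResult:
    """Stage 1: turn the prompt into tokens and process all of them up front."""
    tokens = prompt.lower().split()  # toy whitespace tokenizer (assumption)
    # Toy cache: one integer per prompt token. A real system would store
    # per-token model state here instead.
    cache = [len(tok) for tok in tokens]
    return PrefillResult(prompt_tokens=tokens, cache=cache)


def decode(state: PrefillResult, max_new_tokens: int) -> list[str]:
    """Stage 2: emit output tokens one at a time, extending the cache each step."""
    if not state.prompt_tokens:
        return []
    output = []
    for step in range(max_new_tokens):
        # Toy rule: cycle through the prompt tokens. A real model would
        # choose the next token from a predicted distribution.
        token = state.prompt_tokens[step % len(state.prompt_tokens)]
        output.append(token)
        state.cache.append(len(token))
    return output


def serve(prompt: str, max_new_tokens: int = 4) -> str:
    """Run both stages; in a split design each could sit on different hardware."""
    state = prefill(prompt)
    return " ".join(decode(state, max_new_tokens))
```

Calling `serve("Hello AI world", 4)` runs both stages in sequence. Because the only coupling between them is the state object passed from `prefill` to `decode`, each stage could in principle be placed on hardware suited to it, which is the idea behind the hybrid design described here.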

Increasing Competition in the AI Hardware Market

While Nvidia has established a dominant position in chips used to train AI models, competition is intensifying in the infrastructure required to deploy those models.

Major technology companies are developing alternative processors designed to handle inference workloads efficiently. Central processing units (CPUs) and specialized custom chips are increasingly being used alongside or instead of graphics processors in certain applications.

Companies such as OpenAI, Meta, and Anthropic—after investing heavily in training infrastructure—are now focused on scaling services to support millions of users interacting with AI systems daily.

This transition is also increasing demand for CPUs in data centers. According to Huang, Nvidia’s new CPU products are already gaining traction.

“We are selling a lot of CPU standalone,” Huang noted during the event, suggesting that the segment could quickly become a multi-billion-dollar business for the company.

Nvidia’s Long-Term Infrastructure Roadmap

Beyond its current hardware announcements, Nvidia also previewed elements of its longer-term computing roadmap.

The company highlighted an upcoming platform known as Feynman, expected to arrive later in the decade following the release of its Rubin Ultra processors. While technical details remain limited, the roadmap suggests a broader expansion of Nvidia’s integrated infrastructure approach, combining processors, networking systems, and data center platforms.

Industry analysts say the strategy reflects Nvidia’s evolution from a chip manufacturer into a full-scale provider of computing infrastructure.

Instead of simply releasing individual processors, the company increasingly delivers complete systems composed of multiple hardware layers designed to work together.

Market Reaction and Industry Outlook

Despite investor concerns about whether the massive spending on AI infrastructure can be sustained, Nvidia’s updated forecast helped reassure markets that demand for advanced computing systems remains strong.

Shares of the company rose modestly following the announcement, closing up around 1% after trading higher earlier in the session.

According to analysts, the $1 trillion estimate underscores the scale of investment expected across the AI infrastructure ecosystem.

As enterprises and technology platforms continue integrating AI into their services, the need for high-performance computing systems will likely expand rapidly over the next several years.

For Nvidia, maintaining leadership in this evolving market will depend not only on chip performance but also on the company’s ability to deliver integrated computing platforms capable of powering the next generation of large-scale digital services.